Again, AntsDB tops the TPC-C benchmark

Introduction

TPC Benchmark C (TPC-C) is an on-line transaction processing (OLTP) benchmark. It is an industry standard for evaluating database performance, defined by the Transaction Processing Performance Council (TPC), whose members include industry leaders such as Oracle, IBM and Microsoft.

The benchmark is centered around the principal activities (transactions) of an order-entry environment. These transactions include entering and delivering orders, recording payments, checking the status of orders, and monitoring the level of stock at the warehouses. While the benchmark portrays the activity of a wholesale supplier, TPC-C is not limited to any particular business segment, but rather represents any industry that must manage, sell, or distribute a product or service.
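The five activities above map to the five TPC-C transaction types: New-Order, Payment, Order-Status, Delivery and Stock-Level. The TPC-C specification sets minimum shares for four of them (Payment at least 43%, Order-Status, Delivery and Stock-Level at least 4% each), and drivers commonly give New-Order the remaining 45%. The sketch below shows how a terminal might pick its next transaction from that mix; the 45/43/4/4/4 weights are an assumed typical setting, not necessarily the exact configuration used in this test, and `TRANSACTION_MIX` and `pick_transaction` are hypothetical names.

```python
import random
from collections import Counter

# Assumed transaction mix: TPC-C minimum shares for Payment, Order-Status,
# Delivery and Stock-Level, with New-Order taking the remaining 45%.
TRANSACTION_MIX = {
    "New-Order": 45,     # enter a new customer order
    "Payment": 43,       # record a customer payment
    "Order-Status": 4,   # check the status of a customer's last order
    "Delivery": 4,       # deliver a batch of pending orders (may be deferred)
    "Stock-Level": 4,    # count recently sold items below a stock threshold
}


def pick_transaction(rng: random.Random) -> str:
    """Choose the next transaction type, weighted by TRANSACTION_MIX."""
    names = list(TRANSACTION_MIX)
    weights = list(TRANSACTION_MIX.values())
    return rng.choices(names, weights=weights, k=1)[0]


if __name__ == "__main__":
    rng = random.Random(42)
    counts = Counter(pick_transaction(rng) for _ in range(100_000))
    for name, n in counts.most_common():
        # n out of 100,000 draws, expressed as a percentage
        print(f"{name:13s} {n / 1000:.1f}%")
```

Over many draws the observed shares converge on the configured weights, which is why tpmC (New-Order throughput) is roughly 45% of the total transaction rate under this mix.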

Benchmark Methods

The benchmark used 100 warehouses, about 50 million records in total. TPC-C involves a mix of five concurrent transactions of different types and complexity, executed either on-line or queued for deferred execution, and thereby exercises a breadth of system components associated with such environments. TPC-C performance is measured in new-order transactions per minute (tpmC).
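The "50M records" figure follows from the initial table sizes in the TPC-C specification, which scale with the number of warehouses; only the ITEM table has a fixed size. The sketch below simply does that arithmetic. The ORDER-LINE count is an average (each order has between 5 and 15 lines, 10 on average), so the exact total in a loaded database varies slightly; `initial_rows` is a hypothetical helper, not part of BenchmarkSQL.

```python
# Initial rows per warehouse, as defined by the TPC-C specification.
ROWS_PER_WAREHOUSE = {
    "WAREHOUSE": 1,
    "DISTRICT": 10,
    "CUSTOMER": 30_000,     # 10 districts x 3,000 customers
    "HISTORY": 30_000,      # one row per customer
    "ORDER": 30_000,        # 3,000 orders per district
    "NEW-ORDER": 9_000,     # 900 undelivered orders per district
    "ORDER-LINE": 300_000,  # approximate: ~10 lines per order
    "STOCK": 100_000,       # one row per item
}
ITEM_ROWS = 100_000  # ITEM does not grow with the warehouse count


def initial_rows(warehouses: int) -> int:
    """Approximate number of rows loaded for a given warehouse count."""
    return warehouses * sum(ROWS_PER_WAREHOUSE.values()) + ITEM_ROWS


print(initial_rows(100))  # 50001100
```

With 100 warehouses this gives 100 × 499,011 + 100,000 = 50,001,100 rows, matching the roughly 50 million records stated above.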

TPC-C is an industry standard, and a number of implementations are available. In this test we used BenchmarkSQL 4.1.1, a popular TPC-C implementation developed by the PostgreSQL community. It supports not only PostgreSQL but also MySQL and several other databases, so the same workload can be run against every database under test, which makes for a fair comparison.

Benchmark Setup

The benchmark was performed on an Amazon EC2 i3.4xlarge instance. The system configuration is summarized below.

| Component | Specification |
| --- | --- |
| CPU | Intel(R) Xeon(R) CPU E5-2686 v4 @ 2.30GHz, 8 cores, 16 threads |
| Memory | 122 GiB |
| Storage | 2 × 1.7 TB NVMe SSD |
| Operating System | CentOS 7.4.1708 |
| Databases Compared | MySQL 5.5.56, PostgreSQL 9.2.23, Database O 12.2.0.1.0, AntsDB 18.05.02 |
| Java | 1.8.0_161-b14 |

Database Configuration

| Database | Settings |
| --- | --- |
| AntsDB | humpback.space.file.size=256, humpback.tablet.file.size=1073741824 |
| MySQL | innodb_file_per_table=true, innodb_file_format=Barracuda, innodb_buffer_pool_size=50G, innodb_flush_log_at_trx_commit=0, max_connections=200 |
| PostgreSQL | shared_buffers = 30GB, bgwriter_delay = 10ms, synchronous_commit = off, effective_cache_size = 60GB |
| Database O | MEMORY_TARGET=50g |
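The size settings above use different notations (50G, 30GB, 50g, a raw byte count). A small sketch that normalizes them to bytes makes it easier to compare the memory budgets against the instance's 122 GiB of RAM. It assumes binary multipliers (1 G = 1024³ bytes), which is how MySQL, PostgreSQL and Oracle interpret these suffixes; `parse_size` is a hypothetical helper. The unit of humpback.space.file.size is not stated, so it is left out.

```python
import re

# Binary multipliers, as used by MySQL, PostgreSQL and Oracle memory settings.
_UNITS = {"": 1, "K": 1024, "M": 1024**2, "G": 1024**3, "T": 1024**4}


def parse_size(value: str) -> int:
    """Parse '50G', '30GB', '50g' or a plain byte count into bytes."""
    m = re.fullmatch(r"\s*(\d+)\s*([KMGT]?)B?\s*", value, re.IGNORECASE)
    if m is None:
        raise ValueError(f"unrecognised size: {value!r}")
    number, unit = m.groups()
    return int(number) * _UNITS[unit.upper()]


MACHINE_GIB = 122
memory_settings = {
    "MySQL innodb_buffer_pool_size": "50G",
    "PostgreSQL shared_buffers": "30GB",
    "PostgreSQL effective_cache_size": "60GB",  # planner hint, not an allocation
    "Database O MEMORY_TARGET": "50g",
}

for name, raw in memory_settings.items():
    gib = parse_size(raw) / 1024**3
    print(f"{name:35s} {gib:5.0f} GiB ({gib / MACHINE_GIB:.0%} of RAM)")

# AntsDB's humpback.tablet.file.size of 1073741824 bytes is exactly 1 GiB.
print(parse_size("1073741824") == 1024**3)  # True
```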

Result

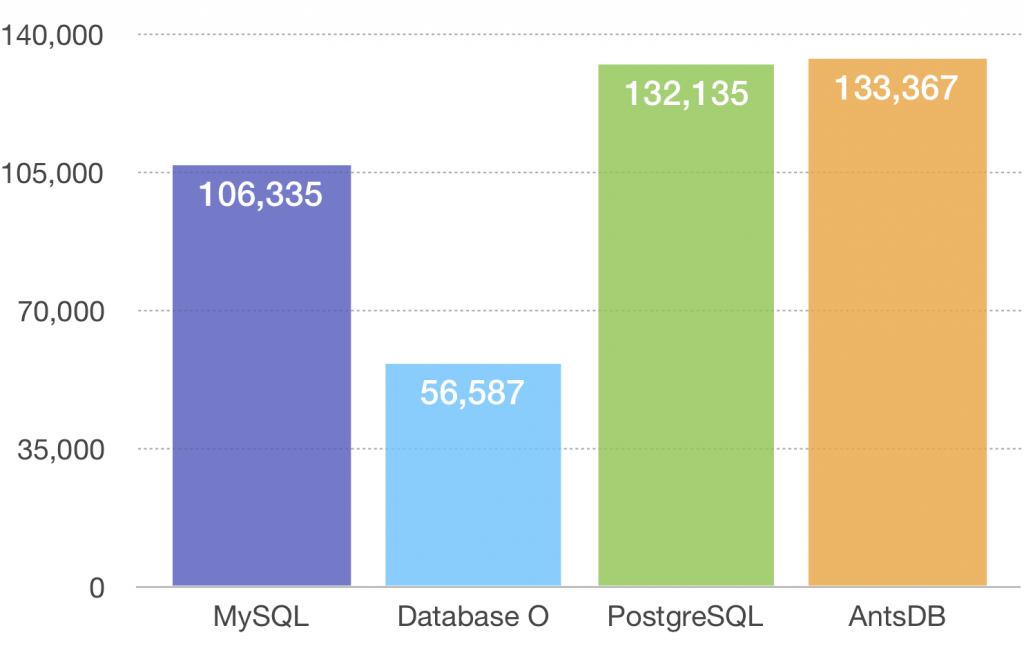

The chart above shows the benchmark results, measured in tpmC (new-order transactions per minute).
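tpmC counts only New-Order transactions, even though the driver runs all five transaction types; Payment, Order-Status, Delivery and Stock-Level add load but do not add to the score. A minimal sketch of the calculation, assuming per-type completion counts and the length of the measurement interval are available. The function name and input format are hypothetical, and the counts in the example are made up for illustration; they are not results from this benchmark.

```python
from typing import Mapping


def tpmc(completed: Mapping[str, int], measurement_seconds: float) -> float:
    """New-Order transactions completed per minute of the measurement interval."""
    if measurement_seconds <= 0:
        raise ValueError("measurement interval must be positive")
    minutes = measurement_seconds / 60.0
    # Only New-Order counts toward tpmC; the other four types are ignored.
    return completed.get("New-Order", 0) / minutes


# Made-up counts for a hypothetical 10-minute run, for illustration only.
counts = {"New-Order": 450_000, "Payment": 430_000, "Order-Status": 40_000,
          "Delivery": 40_000, "Stock-Level": 40_000}
print(tpmc(counts, 600))  # 45000.0
```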

Conclusion

TPC-C is a more complex benchmark than YCSB. While YCSB simulates simple raw reads and writes, TPC-C emphasizes complex transactions that simulate a real-world purchasing workflow. To preserve data integrity, a TPC-C implementation must use row locks and explicit (manual) transactions. Once again, this test shows that AntsDB has very efficient locking and transaction algorithms.
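To illustrate why row locks and manual transactions are needed: every New-Order transaction reads a district's next order id and increments it, so two terminals working on the same district must never receive the same id. The sketch below shows that step on a simplified schema (only a few columns; `open_db` and `new_order` are hypothetical names) using Python's built-in sqlite3 module. SQLite has no row-level locks, so `BEGIN IMMEDIATE`, which reserves the database's write lock at the start of the transaction, stands in for the `SELECT ... FOR UPDATE` on the district row that a driver would issue against MySQL or PostgreSQL. It is not how BenchmarkSQL or AntsDB implements the transaction.

```python
import sqlite3


def open_db() -> sqlite3.Connection:
    # isolation_level=None turns off the module's implicit transactions,
    # so BEGIN/COMMIT/ROLLBACK below are issued manually.
    conn = sqlite3.connect(":memory:", isolation_level=None)
    conn.execute("CREATE TABLE district (d_w_id INT, d_id INT, d_next_o_id INT,"
                 " PRIMARY KEY (d_w_id, d_id))")
    conn.execute("CREATE TABLE orders (o_w_id INT, o_d_id INT, o_id INT, o_c_id INT,"
                 " PRIMARY KEY (o_w_id, o_d_id, o_id))")
    # TPC-C loads 3,000 orders per district, so the next order id starts at 3001.
    conn.execute("INSERT INTO district VALUES (1, 1, 3001)")
    return conn


def new_order(conn: sqlite3.Connection, w_id: int, d_id: int, c_id: int) -> int:
    """Allocate the district's next order id and insert the order atomically."""
    conn.execute("BEGIN IMMEDIATE")  # stand-in for locking the district row
    try:
        row = conn.execute(
            "SELECT d_next_o_id FROM district WHERE d_w_id = ? AND d_id = ?",
            (w_id, d_id)).fetchone()
        if row is None:
            raise LookupError(f"no district ({w_id}, {d_id})")
        o_id = row[0]
        conn.execute(
            "UPDATE district SET d_next_o_id = d_next_o_id + 1"
            " WHERE d_w_id = ? AND d_id = ?", (w_id, d_id))
        conn.execute("INSERT INTO orders VALUES (?, ?, ?, ?)",
                     (w_id, d_id, o_id, c_id))
        conn.execute("COMMIT")
        return o_id
    except Exception:
        conn.execute("ROLLBACK")  # release the lock and undo partial work
        raise


db = open_db()
print(new_order(db, 1, 1, 42), new_order(db, 1, 1, 7))  # 3001 3002
```

Because the read and the increment happen inside one locked transaction, a concurrent terminal has to wait for the commit and then sees the updated id; without the lock, both could read 3001.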

Due to resource constraints, the database server and the benchmark software ran on the same machine, so part of the system resources was consumed by the benchmark program itself. This is not ideal, because in production, applications and databases usually run on separate machines. We therefore expect even better performance with a dedicated database server.
